As foundation models continue to improve, AI coding tools are evolving from simple code-completion assistants into coding agents that can participate in the full software development lifecycle. Unlike traditional copilot-style tools, coding agents do more than generate code from prompts. They can read and navigate codebases, modify files, run commands, invoke external tools, and complete complex tasks through multi-step interaction. As this shift continues, developers need more than prompt-writing techniques. They need a reliable way to work with coding agents in practice. Across leading tools, a shared usage pattern is starting to emerge: provide clear task context, plan execution steps, capture project-level guidance, connect external tools and systems, and automate repetitive workflows so agents can collaborate effectively in real development environments over time. Drawing on official guidance from these tools, this article outlines a more general best practices framework for coding agents.

1. Treat Coding Agents as Collaborators, Not One-Off Assistants

A common mistake when using a coding agent is to treat it like a one-off question-and-answer tool: ask a question, get a piece of code, and end the interaction. In practice, that approach does not make full use of what a coding agent can do. A coding agent is better understood as a configurable collaborator that can be refined over time. Through project-level guidance files, tool integrations, and reusable skills, developers can continuously shape the agent’s behavior so it becomes better aligned with the team’s development workflow.

2. Structure Task Inputs: Context Matters More Than Prompting Tricks

When working with a coding agent, many developers focus too much on prompt-writing techniques and not enough on what matters more: task context. In a complex codebase, an effective task description typically includes four elements:

Goal

Clearly describe what needs to be built or changed, such as fixing a bug, implementing an endpoint, or refactoring a module.

Context

Provide the relevant files, error messages, documentation, or examples. For example, specify which files, functions, or modules are involved.

Constraints

List the engineering requirements the agent should follow, such as coding standards, architectural rules, security requirements, or dependency limitations.

Done when

Define how completion should be evaluated, such as tests passing, behavior changing as expected, or the bug no longer reproducing.
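
The four elements above can be kept together in a small structure and rendered into the task description. The following is a minimal sketch, not a format required by any particular tool: the `TaskBrief` class, its field names, and the example file path are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Hypothetical container for the four elements of a task description."""

    goal: str
    context: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    done_when: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the brief as plain text that can be pasted into a prompt."""
        sections = [
            ("Goal", [self.goal]),
            ("Context", self.context),
            ("Constraints", self.constraints),
            ("Done when", self.done_when),
        ]
        lines = []
        for title, items in sections:
            if not items:
                continue  # leave out sections that have no entries
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Example values are illustrative only; the path is hypothetical.
brief = TaskBrief(
    goal="Fix the crash when the login form is submitted with an empty password",
    context=["src/auth/login.py", "Traceback from the failing request"],
    constraints=["Do not add new dependencies"],
    done_when=["Existing tests pass", "The crash no longer reproduces"],
)
print(brief.render())
```

Writing the brief as data rather than free text makes it easy to notice when one of the four elements is missing before the task is handed to the agent.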

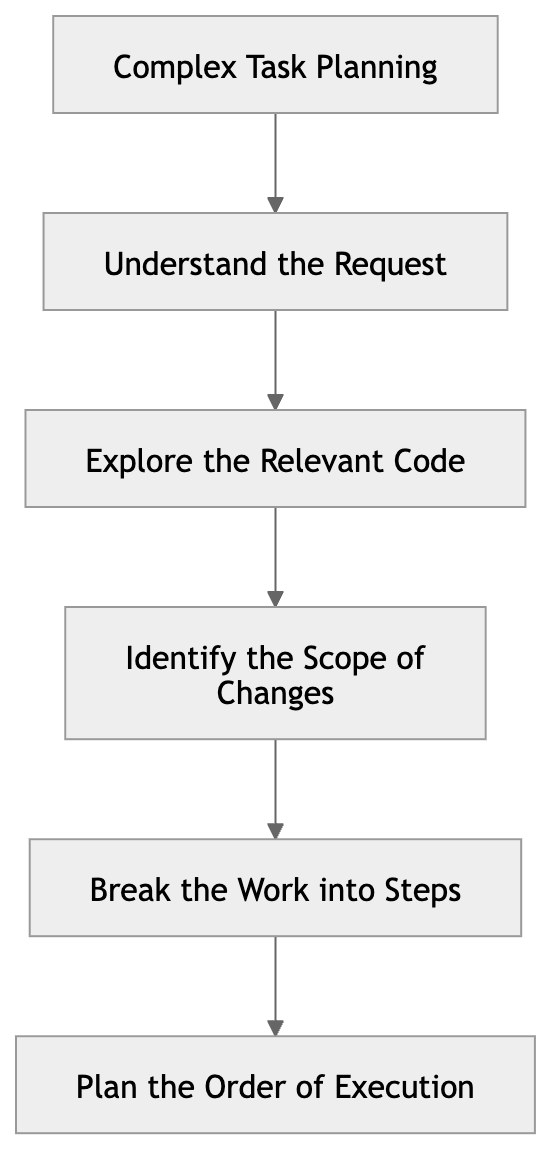

3. Plan Before Execution for Complex Tasks

Once a task has clear context, the next challenge is execution. For complex requests, coding agents are most effective when they plan before they act: asking the agent to start writing code immediately often leads to logic errors, unnecessary rework, or repeated revisions. A more effective approach is to have the agent produce a plan first, then move into implementation.
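
One way to make the plan-first habit explicit is to treat the plan as a separate artifact that must be accepted before any step runs. The sketch below assumes a human approval flag and a `run_step` callback; both are hypothetical names introduced here, and real tools expose planning in their own ways.

```python
from dataclasses import dataclass, field


@dataclass
class Plan:
    """Hypothetical record of a plan the agent proposes before editing code."""

    steps: list[str]
    approved: bool = False
    completed: list[str] = field(default_factory=list)


def execute(plan: Plan, run_step) -> list[str]:
    """Run each step in order, but only after the plan has been approved."""
    if not plan.approved:
        raise PermissionError("plan must be approved before execution")
    for step in plan.steps:
        run_step(step)  # run_step stands in for whatever work a step involves
        plan.completed.append(step)
    return plan.completed
```

Keeping planning and execution as two distinct phases gives the developer a cheap point at which to correct the approach before any code changes.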

4. Capture Repeated Rules in Project-Level Configuration Files

In practice, many prompts end up repeating the same project rules. From a practical standpoint, this can be reduced to one simple rule: put temporary instructions in the prompt, and put long-lived rules in project-level configuration files.
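
The split between long-lived rules and temporary instructions can be illustrated with a short sketch. In many tools the agent reads its guidance file on its own, so this code is not something a developer would normally need to write; it only makes the separation visible. The function names and the idea of passing the file path explicitly are assumptions.

```python
from pathlib import Path


def load_rules(path: Path) -> str:
    """Read long-lived project rules from a file; return '' if it is absent."""
    if not path.is_file():
        return ""
    return path.read_text(encoding="utf-8").strip()


def build_prompt(project_rules: str, task_instructions: str) -> str:
    """Combine long-lived project rules with the instructions for one task."""
    parts = []
    if project_rules.strip():
        parts.append("Project rules (long-lived):\n" + project_rules.strip())
    parts.append("Task (this request only):\n" + task_instructions.strip())
    return "\n\n".join(parts)
```

With this split, changing a team convention means editing one file, while each prompt carries only what is specific to the current request.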

5. The Execution Environment Defines What the Agent Can Do

When working with coding agents, developers often attribute inconsistent results to model capability. In practice, many of these issues are caused by an incomplete or poorly configured execution environment. Unlike traditional code-completion tools, coding agents are typically expected to operate in a real development environment and carry out tasks there, so the environment bounds what they can do.

At a higher level, a coding agent depends on three types of context:

- Task Context: the prompt and input for the current task

- Project Context: the repository structure and engineering rules

- Environment Context: the tools, permissions, and execution environment
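
Environment context is the easiest of the three to check mechanically. As a minimal sketch, a preflight check can confirm that the command-line tools a task relies on are actually installed before the agent starts. The tool list below is an assumption for illustration; `shutil.which` is the standard-library lookup for executables on `PATH`.

```python
import shutil
from typing import Callable, Iterable, Optional


def missing_tools(
    required: Iterable[str],
    which: Callable[[str], Optional[str]] = shutil.which,
) -> list[str]:
    """Return the required command-line tools that are not found on PATH."""
    return [tool for tool in required if which(tool) is None]


# Assumed tool list for illustration; replace with what the project uses.
REQUIRED_TOOLS = ["git", "python3"]

missing = missing_tools(REQUIRED_TOOLS)
if missing:
    print("Environment is incomplete, missing:", ", ".join(missing))
else:
    print("All required tools are available.")
```

A check like this helps separate environment problems from model problems when results are inconsistent.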

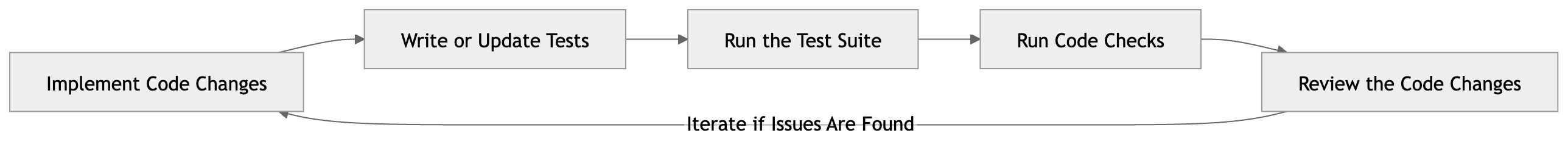

6. Involve Coding Agents in the Full Development Loop

Once a coding agent has the right execution environment, the next step is to involve it in the full development loop rather than using it for code generation alone. In real software development, a code change is rarely judged on generation alone. It also needs to pass tests, comply with engineering standards, and go through appropriate review. A typical agent-driven development loop includes the following steps:

- Implement code changes: Modify existing code or add new code based on the task requirements.

- Write or update tests: Add test coverage for new functionality or for the bug being fixed.

- Run the test suite: Execute unit or integration tests to verify that the changes behave as expected.

- Run code checks: Run linting, formatting, or type-checking tools to ensure the changes meet engineering standards.

- Review the code changes: Inspect the diff to identify potential issues, regression risks, or unintended modifications.

From a workflow perspective, this shifts the coding agent from a traditional code generator to an execution node within the development loop.
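
The verification steps of this loop (running the test suite and the code checks) can be sketched as a small runner that executes commands in order and stops at the first failure. This is an illustration under stated assumptions: `run_checks` and `StepResult` are hypothetical names, and the commands are harmless placeholders so the sketch runs anywhere. A real project would substitute its own test runner, linter, and type checker.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StepResult:
    name: str
    returncode: int

    @property
    def ok(self) -> bool:
        return self.returncode == 0


def run_checks(steps: list[tuple[str, list[str]]]) -> list[StepResult]:
    """Run each (name, command) pair in order and stop at the first failure."""
    results = []
    for name, command in steps:
        completed = subprocess.run(command, capture_output=True, text=True)
        results.append(StepResult(name, completed.returncode))
        if completed.returncode != 0:
            break  # later steps are skipped so the failure can be fixed first
    return results


# Placeholder command that always succeeds; not a real test or lint command.
PLACEHOLDER = [sys.executable, "-c", "pass"]
STEPS = [
    ("run tests", PLACEHOLDER),
    ("run code checks", PLACEHOLDER),
]

for result in run_checks(STEPS):
    print(result.name, "ok" if result.ok else "failed")
```

Stopping at the first failing step keeps the feedback focused: the agent fixes one concrete failure before moving on to the next check.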

7. Extend Agent Context with MCP

In real development workflows, the information a coding agent needs does not always live inside the code repository. Much of the context that shapes implementation decisions is often spread across external systems.

From a workflow perspective, this is a meaningful shift. When an agent can only use the information provided in the prompt, it is usually limited to localized tasks. Once it can connect to external systems, it becomes capable of participating in more complete development workflows, such as reading issue context, investigating failed CI runs, checking API definitions, or analyzing problems against database schemas.
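
To give a sense of what such a connection looks like on the wire: MCP (Model Context Protocol) messages are JSON-RPC 2.0, and a tool invocation is, as far as the published specification describes it, a `tools/call` request carrying a tool name and arguments. The sketch below only builds that message. The tool name `get_issue` and its argument are hypothetical, and transport, initialization, and response handling are left out.

```python
import json
from itertools import count

# Simple counter so that each request gets a distinct id.
_request_ids = count(1)


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# "get_issue" and its argument are hypothetical; real tool names come from
# whatever the connected server advertises.
print(tool_call_request("get_issue", {"issue_id": "123"}))
```

In practice the agent's MCP client produces these messages itself; developers mostly configure which servers are available rather than writing requests by hand.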

8. Capture Repeated Workflows as Skills

Over time, teams often find that certain tasks come up again and again when working with coding agents. If a prompt pattern or task flow is used repeatedly, it should probably be captured as a Skill.

This shifts the use of coding agents from one-off, conversation-driven interaction toward more workflow-oriented task execution. As the skill library grows, agent behavior also becomes more consistent and predictable.
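
At its simplest, a Skill can be thought of as a named, parameterized task template. The sketch below models that idea with the standard library's `string.Template`. It is not how any specific tool stores skills, and the skill name and its wording are invented for illustration.

```python
from string import Template

# Hypothetical skill library: each skill is a named, parameterized template.
SKILLS = {
    "write-release-notes": Template(
        "Summarize the changes between $previous_tag and $new_tag "
        "as release notes grouped by feature, fix, and breaking change."
    ),
}


def render_skill(name: str, **params: str) -> str:
    """Fill in a stored skill template.

    Raises KeyError if the skill is unknown or a parameter is missing.
    """
    return SKILLS[name].substitute(**params)
```

For example, `render_skill("write-release-notes", previous_tag="v1.0", new_tag="v1.1")` produces the same instructions every time, which is where the consistency of a skill library comes from.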

9. Automate Stable Workflows

Once a Skill can be executed reliably, the next step is often to automate it. In long-running development workflows, many tasks are inherently repetitive or time-based.

Automation can be understood as the next layer above Skills. A Skill defines how a workflow is executed, while Automation determines when that workflow runs and how it continues to operate over time.
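
The distinction between "how" and "when" can be sketched in a few lines: the skill is an ordinary callable, and the automation layer only decides whether it is due. The function names and the fixed-interval policy below are assumptions; real tools may use cron-style schedules or event triggers instead.

```python
from datetime import datetime, timedelta
from typing import Callable, Optional


def is_due(
    last_run: Optional[datetime], interval: timedelta, now: datetime
) -> bool:
    """Return True if the workflow never ran or the interval has elapsed."""
    return last_run is None or now - last_run >= interval


def run_if_due(
    skill: Callable[[], None],
    last_run: Optional[datetime],
    interval: timedelta,
    now: datetime,
) -> Optional[datetime]:
    """Run the skill when it is due and return the updated last-run time."""
    if is_due(last_run, interval, now):
        skill()  # the skill defines how the workflow is executed
        return now
    return last_run
```

Passing `now` in explicitly, instead of reading the clock inside the function, keeps the scheduling decision easy to test.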

10. Manage Agent Sessions Deliberately

When working with coding agents, a session is more than just a chat history. In practice, it functions as a working context that accumulates background information, intermediate reasoning, and execution results over time. As a task progresses, the agent gradually builds up information within the same session.

Use a separate session for each task

Avoid mixing unrelated tasks in the same session so the working context stays clear.

Avoid overly long sessions

When a session accumulates too much history, use summaries or compression to reduce context overhead.

Start a new session for branch explorations

If the task opens up a new line of investigation, continue it in a separate session instead of piling more changes into the original one.

Periodically compress historical context

Summarize older parts of the conversation to reduce pressure on the context window.
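
Compressing older history can be sketched as follows: keep the most recent messages verbatim and replace everything before them with a single summary entry. The function names are hypothetical, and `naive_summary` is only a placeholder. In a real tool the summary would likely be produced by the model or by a built-in compaction feature.

```python
from typing import Callable


def compress_history(
    messages: list[str],
    keep_recent: int,
    summarize: Callable[[list[str]], str],
) -> list[str]:
    """Replace all but the most recent messages with one summary entry."""
    if keep_recent < 0:
        raise ValueError("keep_recent must be non-negative")
    if len(messages) <= keep_recent:
        return list(messages)  # nothing old enough to compress
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    return [summarize(older)] + recent


def naive_summary(older: list[str]) -> str:
    """Placeholder summarizer that only records how much was condensed."""
    return f"[summary of {len(older)} earlier messages]"
```

Keeping the recent turns intact preserves the details the agent is actively working with, while the summary carries forward only the gist of earlier work.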

Conclusion

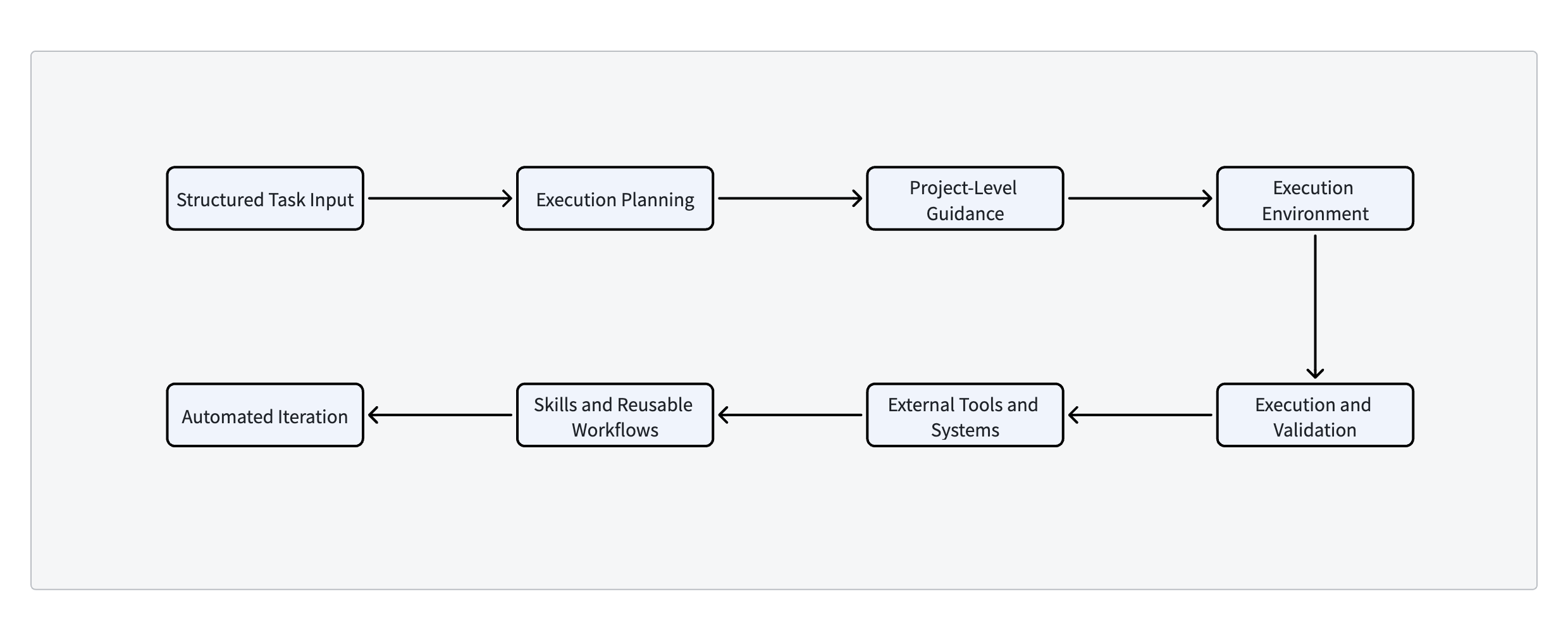

The effectiveness of a coding agent does not come from the model alone. It also depends on how developers structure the workflow around it. In practice, a mature coding agent workflow moves through the stages covered above: structured task input, planning, project-level configuration, environment setup, participation in the full development loop, external tool integration, skills, automation, and deliberate session management.

Through this workflow, a coding agent can gradually evolve from a simple code generation tool into a collaborative system that participates across the full software development lifecycle.