Overview

GLM-5-Turbo is a foundation model deeply optimized for the OpenClaw scenario. Starting from the training phase, it has been optimized for the core requirements of OpenClaw tasks, strengthening key capabilities such as tool invocation, instruction following, scheduled and persistent tasks, and long-chain execution.

Positioning

ClawBench Enhanced Model

Input Modalities

Text

Output Modalities

Text

Context Length

200K

Maximum Output Tokens

128K

Capability

Thinking Mode

Offers multiple thinking modes for different scenarios

Streaming Output

Supports real-time streaming responses to improve the user interaction experience

Function Call

Powerful tool invocation capabilities, enabling integration with various external toolsets

Context Caching

Intelligent caching mechanism to optimize performance in long conversations

Structured Output

Support for structured output formats like JSON, facilitating system integration

MCP

Flexibly integrate external MCP tools and data sources to expand use cases
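As a rough illustration of how the streaming, function call, and structured output capabilities above might be combined in one request, the sketch below assembles a request body as a plain dictionary. It assumes an OpenAI-style chat-completions schema (`tools`, `response_format`, `stream`); the model identifier `glm-5-turbo` and the `get_weather` tool are hypothetical placeholders, so check the API documentation for the exact field names and values.

```python
import json

# Assumption: the model identifier is a placeholder, not confirmed by this page.
MODEL_ID = "glm-5-turbo"


def build_request(messages, tools=None, json_output=False, stream=False):
    """Assemble a chat-completions style request body as a plain dict.

    Assumes OpenAI-compatible field names; nothing is sent over the network.
    """
    body = {"model": MODEL_ID, "messages": messages, "stream": stream}
    if tools:
        # Function Call: declare the tools the model is allowed to invoke.
        body["tools"] = tools
    if json_output:
        # Structured Output: ask for a JSON object instead of free text.
        body["response_format"] = {"type": "json_object"}
    return body


# Hypothetical tool definition, described with a JSON Schema for its arguments.
weather_tool = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}

request_body = build_request(
    [{"role": "user", "content": "What is the weather in Berlin? Reply in JSON."}],
    tools=[weather_tool],
    json_output=True,
    stream=True,
)
print(json.dumps(request_body, indent=2))
```

Keeping the body as a plain dictionary makes it easy to inspect or log before handing it to whichever client (cURL, an official SDK, or the OpenAI Python SDK) actually sends it.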

Introducing GLM-5-Turbo

OpenClaw Native Model

From training data construction to the design of optimization objectives, we systematically built a variety of OpenClaw task scenarios based on real-world agent workflows, so that the model can execute complex, dynamic, long-chain tasks. We significantly enhanced the following core capabilities:

- Tool Calling: Precise Invocation, No Failures. GLM-5-Turbo has a strengthened ability to invoke external tools and various skills, giving greater stability and reliability in multi-step tasks and enabling OpenClaw tasks to move from dialogue to execution.

- Instruction Following: Enhanced Decomposition of Complex Instructions. The model shows stronger comprehension and decomposition of complex, multi-layered, long-chain instructions. It can accurately identify objectives, plan steps, and support collaborative task division among multiple agents.

- Scheduled and Persistent Tasks: Better Understanding of Time Dimensions, Uninterrupted Long Tasks. The model is significantly optimized for scenarios involving scheduled triggers, continuous execution, and long-running tasks. It better understands time-related requirements and maintains execution continuity during complex, long-running tasks.

- High-Throughput Long Chains: Faster and More Stable Execution. For OpenClaw tasks involving high data throughput and long logical chains, GLM-5-Turbo further improves execution efficiency and response stability, making it better suited for integration into real-world business workflows.
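To make the tool-calling step in the list above more concrete, here is a minimal sketch of the client side of one agent turn: the model returns tool calls, the client runs them locally, and the results are sent back as tool messages. It assumes OpenAI-style tool-call objects (`id`, `function.name`, and `function.arguments` as a JSON string); the `add` and `word_count` tools are hypothetical stand-ins and no model is actually called.

```python
import json


# Hypothetical local tools; a real agent would register its own.
def add(a, b):
    return a + b


def word_count(text):
    return len(text.split())


TOOLS = {"add": add, "word_count": word_count}


def run_tool_calls(tool_calls):
    """Execute each requested tool and build the tool-role reply messages."""
    replies = []
    for call in tool_calls:
        name = call["function"]["name"]
        # Arguments arrive as a JSON-encoded string in this assumed schema.
        args = json.loads(call["function"]["arguments"])
        if name in TOOLS:
            content = json.dumps({"result": TOOLS[name](**args)})
        else:
            # Report unknown tools back to the model instead of raising.
            content = json.dumps({"error": f"unknown tool: {name}"})
        replies.append(
            {"role": "tool", "tool_call_id": call["id"], "content": content}
        )
    return replies


# Sample tool calls shaped like an assistant message's tool_calls list.
sample_calls = [
    {"id": "call_1", "type": "function",
     "function": {"name": "add", "arguments": '{"a": 2, "b": 3}'}},
    {"id": "call_2", "type": "function",
     "function": {"name": "word_count",
                  "arguments": '{"text": "long chain task"}'}},
]

tool_messages = run_tool_calls(sample_calls)
for message in tool_messages:
    print(message["tool_call_id"], message["content"])
```

In a long-chain task this function would sit inside a loop that appends the tool messages to the conversation and calls the model again until it stops requesting tools.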

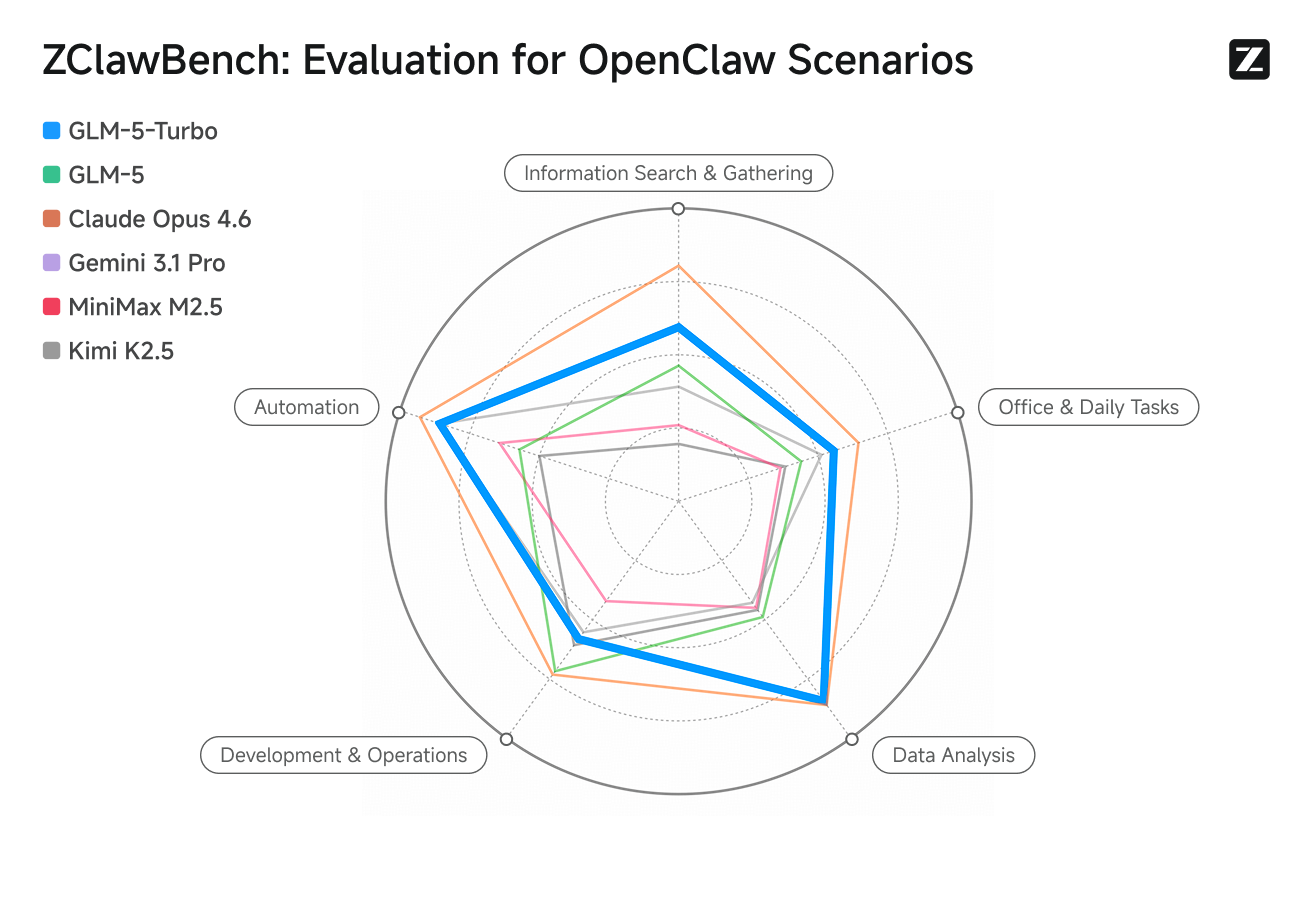

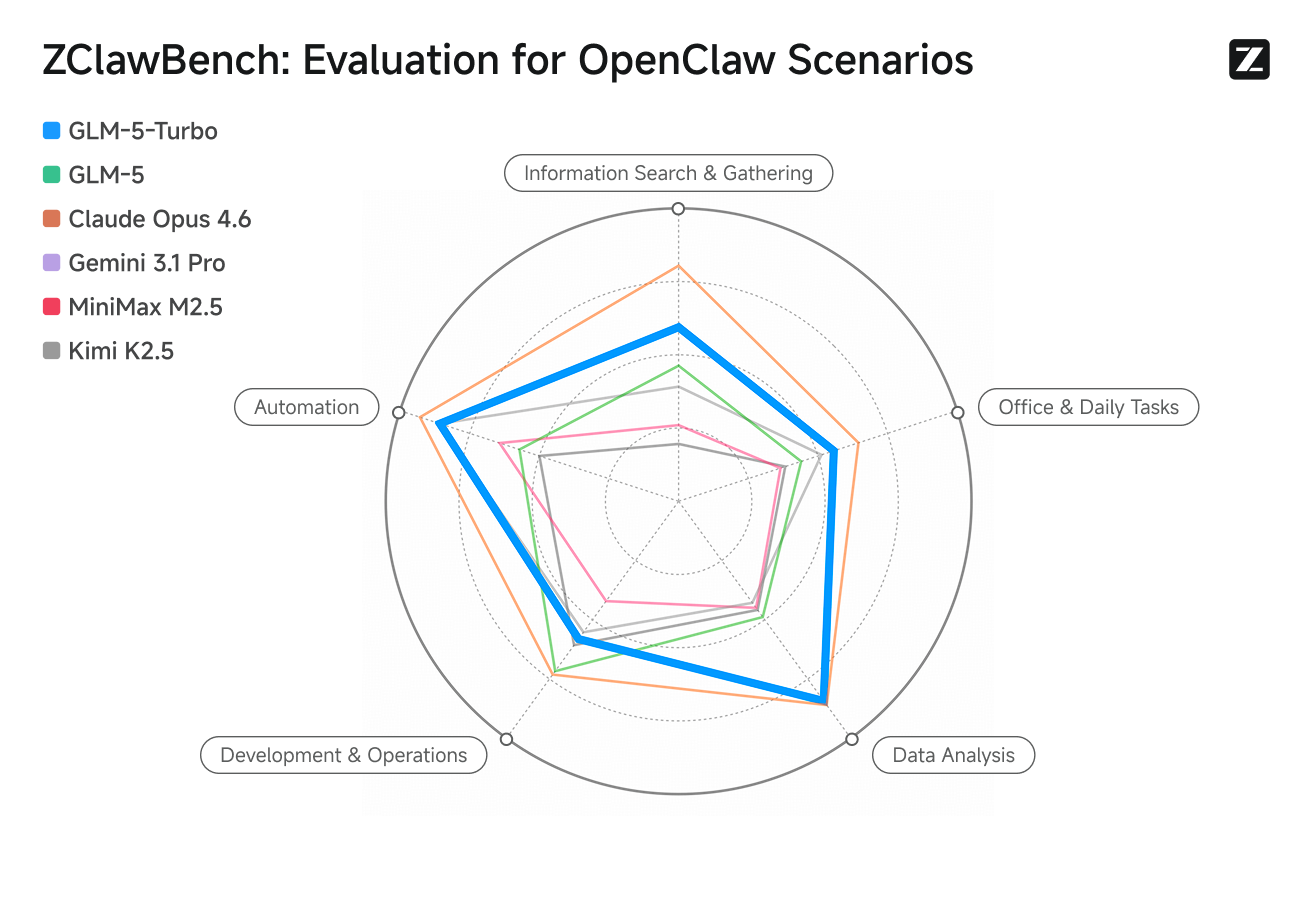

ZClawBench: A Benchmark for the OpenClaw Agent Scenario

With the growing adoption of OpenClaw, evaluating model performance in OpenClaw workflows has become a key focus across the industry. Based on extensive analysis of real OpenClaw use cases, we introduce ZClawBench, an end-to-end benchmark designed specifically for agent tasks in the OpenClaw ecosystem.

Current OpenClaw workloads span a wide range of task types, including environment setup, software development, information retrieval, data analysis, and content creation. The user base has also expanded beyond early developer adopters to include productivity users, financial professionals, operations engineers, content creators, and research analysts. Meanwhile, the usage of Skills has increased rapidly, from 26% to 45% in a short period of time, highlighting a clear shift toward a more modular, skill-driven agent ecosystem.

Benchmark results show that GLM-5-Turbo delivers substantial improvements over GLM-5 in OpenClaw scenarios, outperforming several leading models across multiple key task categories. The ZClawBench dataset and full evaluation trajectories are now publicly available. We invite the community to validate, reproduce, and further improve the benchmark.

Resources

- API Documentation: Learn how to call the API.

- OpenClaw Guide: Learn how to integrate with OpenClaw.

Quick Start

The following sample code helps you get started with GLM-5-Turbo.

- cURL

- Official Python SDK

- Official Java SDK

- OpenAI Python SDK

- Basic Call

- Streaming Call
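The original sample code for these tabs is not included on this page. As a stand-in, the following is a minimal sketch of a basic call and a streaming call using only the Python standard library. It rests on several assumptions: the endpoint URL, the model identifier `glm-5-turbo`, the `ZAI_API_KEY` environment variable name, and an OpenAI-style response and server-sent-event format. Confirm each of these against the API documentation before relying on it.

```python
import json
import os
import urllib.request

# Assumptions: endpoint and model identifier are not confirmed by this page.
API_URL = "https://api.z.ai/api/paas/v4/chat/completions"
MODEL_ID = "glm-5-turbo"


def build_body(prompt, stream=False):
    """Build a single-turn request body (OpenAI-style schema assumed)."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,
    }


def post_chat(api_key, body):
    """POST the body as JSON and return the open HTTP response."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    return urllib.request.urlopen(request, timeout=60)


def iter_stream_text(lines):
    """Yield text pieces from server-sent-event lines until [DONE]."""
    for raw in lines:
        line = raw.decode("utf-8") if isinstance(raw, bytes) else raw
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and other SSE fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break
        choices = json.loads(data).get("choices") or []
        if choices:
            text = choices[0].get("delta", {}).get("content")
            if text:
                yield text


# Runs only as a script, and only when a key is present in the environment.
if __name__ == "__main__" and os.environ.get("ZAI_API_KEY"):
    key = os.environ["ZAI_API_KEY"]

    # Basic call: read the whole JSON response at once.
    with post_chat(key, build_body("Hello!")) as response:
        print(json.load(response)["choices"][0]["message"]["content"])

    # Streaming call: print text pieces as they arrive.
    with post_chat(key, build_body("Hello!", stream=True)) as response:
        for piece in iter_stream_text(response):
            print(piece, end="", flush=True)
        print()
```

The only difference between the two calls is the `stream` flag and how the response is read, which keeps the parsing logic testable without network access.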